The residential proxy market in 2026 offers more choice than ever. Some providers focus on enterprise-grade infrastructure, compliance workflows, and managed tooling. Others compete on self-serve access, lower pricing, and flexible plans for fast-moving teams.

For companies working on public web data collection, price monitoring, ad verification, localized content research, and other data-intensive workflows, the best provider is not always the biggest or the most expensive. The right choice depends on your traffic volume, targeting needs, integration requirements, and budget.

This guide reviews five residential proxy providers based on pricing structure, IP pool scale, geographic coverage, targeting options, and product fit for different types of teams.

Before comparing providers, it helps to define the criteria that matter most in practice.

A large IP pool can help distribute requests more broadly, but raw IP count is only part of the picture. Geographic coverage, ISP diversity, and network quality all affect how useful that pool is in real workflows.

Country-level targeting is now table stakes. For teams collecting localized data, city-level and ASN-level targeting can be important when region-specific content, pricing, or search visibility matters.

Different workflows need different session behavior. Some use cases benefit from rotating sessions, while others depend on session consistency over a longer period. A good provider should support both without unnecessary complexity.
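The difference between rotating and sticky sessions can be sketched in a few lines. This is a simplified local model rather than any provider's actual API: the `SessionPicker` class, its `sticky_seconds` parameter, and the `session-N` labels are all hypothetical names chosen for illustration.

```python
import itertools
import time


class SessionPicker:
    """Picks a session label per request (hypothetical model, not a vendor API)."""

    def __init__(self, sticky_seconds=0, clock=time.monotonic):
        # sticky_seconds=0 means "rotate on every request".
        self.sticky_seconds = sticky_seconds
        self.clock = clock
        self._counter = itertools.count(1)
        self._current = None
        self._started = None

    def next_session(self):
        now = self.clock()
        expired = (
            self._current is None
            or self.sticky_seconds == 0
            or now - self._started >= self.sticky_seconds
        )
        if expired:
            # Start a new session and remember when it began.
            self._current = f"session-{next(self._counter)}"
            self._started = now
        return self._current


rotating = SessionPicker()                   # new session on every call
sticky = SessionPicker(sticky_seconds=1800)  # same session for 30 minutes
```

In practice the session label would usually be passed to the provider in whatever way its documentation specifies; the sketch only shows the timing logic a client might use to decide when to ask for a fresh session.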

Clear per-GB pricing, understandable plan structure, and a straightforward trial option make it easier to evaluate actual cost against operational needs.

Most teams want infrastructure that works with standard tools and frameworks. Dashboard clarity, authentication options, and onboarding friction all shape how quickly a provider becomes useful.
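As a rough illustration of what "works with standard tools" means, the sketch below routes traffic through an authenticated HTTP proxy using only Python's standard library. The host, port, and credentials are placeholders, not real endpoints; each provider documents its own gateway address and username format.

```python
import urllib.request
from urllib.parse import quote


def proxy_url(host, port, username, password):
    """Build an authenticated proxy URL from placeholder connection details."""
    # Percent-encode credentials so characters like '@' or ':' don't break the URL.
    auth = f"{quote(username, safe='')}:{quote(password, safe='')}"
    return f"http://{auth}@{host}:{port}"


def make_opener(url):
    """Return an opener that sends both HTTP and HTTPS requests via the proxy."""
    handler = urllib.request.ProxyHandler({"http": url, "https": url})
    return urllib.request.build_opener(handler)


# Placeholder values for illustration only.
opener = make_opener(proxy_url("proxy.example.com", 8000, "user", "p@ss:word"))
# opener.open("https://example.com", timeout=10) would then go through the proxy.
```

If a provider needs more than this (custom certificates, a proprietary client, or a non-standard authentication flow), that is worth factoring into the evaluation.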

Bright Data

Best for: Enterprise teams with advanced compliance, procurement, and managed tooling requirements

Residential proxy pricing: from $4.20/GB PAYG, with lower rates on larger commitments

IP pool: 400M+ residential IPs

Coverage: 195+ countries

Targeting: Country / City / ASN / ZIP code

Sticky sessions: up to 30 minutes

Protocols: HTTP/HTTPS + SOCKS5

Trial: Limited, depending on plan and verification

Bright Data is one of the largest and most established names in the proxy market. Its residential network is backed by a broad product ecosystem that includes proxy management tools, APIs, and additional data infrastructure services.

For enterprise teams, Bright Data’s appeal often comes from scale, product breadth, and compliance-oriented positioning. Organizations with more formal internal review, procurement, or governance requirements may value that maturity.

Oxylabs

Best for: Teams that value premium infrastructure and developer-focused tooling

Residential proxy pricing: from $2.50/GB on volume plans

IP pool: 175M+ residential IPs

Coverage: 195+ countries

Targeting: Country / City / ASN / Carrier

Sticky sessions: up to 30 minutes

Protocols: HTTP/HTTPS + SOCKS5

Oxylabs has built a strong reputation around performance, developer experience, and enterprise-grade web data tooling. Its residential proxy offering is supported by a broader platform that includes APIs and workflow-oriented data products.

For organizations that want a polished infrastructure experience and may also benefit from adjacent data collection tools, Oxylabs is a strong option to evaluate.

Decodo

Best for: Mid-market teams that want a balance between usability and price

Residential proxy pricing: from $2.20/GB

IP pool: 55M+ residential IPs

Coverage: 195+ countries

Targeting: Country / City / ASN

Sticky sessions: up to 30 minutes

Protocols: HTTP/HTTPS + SOCKS5

Trial: 3-day money-back guarantee

Decodo is positioned as a practical middle-ground option. It offers a more accessible entry point than some enterprise-focused platforms while still delivering the targeting and protocol support many teams expect.

For growing teams that have moved beyond entry-level tools but do not need a heavy enterprise stack, Decodo can be a reasonable fit.

IPRoyal

Best for: Low-volume users and teams that want simple pay-as-you-go access

Residential proxy pricing: from $1.75/GB

IP pool: 32M+ residential IPs

Coverage: 195+ countries

Targeting: Country / City / ASN

Sticky sessions: up to 24 hours

Protocols: HTTP/HTTPS + SOCKS5

Trial: Pay-as-you-go, no minimum

IPRoyal is often attractive to smaller teams, solo developers, and lower-volume users because of its straightforward pay-as-you-go structure and non-expiring traffic model.

That flexibility can make it a convenient option for intermittent projects or testing new workflows without committing to a larger plan.

Thordata

Best for: Cost-conscious teams that need flexible residential proxy infrastructure at scale

Residential proxy pricing: from $0.65/GB

Unlimited plan: from $38/day

IP pool: 100M+ residential IPs

Coverage: 190+ countries

Targeting: Country / State / City / ASN

Sticky sessions: up to 90 minutes

Protocols: HTTP/HTTPS

Trial: Free trial available

Thordata stands out on pricing while still offering the features most teams expect from a modern residential proxy platform. Its network includes 100M+ residential IPs across 190+ countries, with city- and ASN-level targeting available through self-serve plans.

The product lineup supports a wide range of workflows, including rotating residential proxies, unlimited bandwidth plans for higher-volume operations, mobile proxies, and SERP-focused products. For teams that want a single provider across multiple proxy use cases, that breadth can simplify vendor selection.

Thordata is especially attractive for teams where per-GB efficiency matters. The unlimited residential plan also gives higher-volume users an alternative to bandwidth-based pricing when usage becomes less predictable.
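To see where the unlimited plan starts to pay off, a quick break-even calculation helps. The sketch below assumes the entry-level figures listed above ($0.65/GB and $38/day) apply to your account, which may not hold for every plan or volume tier; the function names are illustrative.

```python
def break_even_gb_per_day(per_gb_price, unlimited_daily_price):
    """Daily volume (GB) at which metered and unlimited pricing cost the same."""
    return unlimited_daily_price / per_gb_price


def cheaper_plan(gb_per_day, per_gb_price=0.65, unlimited_daily_price=38.0):
    """Return which pricing model costs less for a given daily volume."""
    metered_cost = gb_per_day * per_gb_price
    return "unlimited" if metered_cost > unlimited_daily_price else "per-GB"


threshold = break_even_gb_per_day(0.65, 38.0)
print(f"Break-even: about {threshold:.1f} GB/day")  # about 58.5 GB/day
print(cheaper_plan(40))   # per-GB
print(cheaper_plan(100))  # unlimited
```

Under these assumptions, the unlimited plan only becomes the cheaper choice once sustained usage exceeds roughly 58 GB per day.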

| Provider | Residential Price | IP Pool | Countries | City Targeting | Unlimited Plan | Trial |
|---|---|---|---|---|---|---|
| Thordata | From $0.65/GB | 100M+ | 190+ | Yes | From $38/day | Free trial |
| Bright Data | From $4.20/GB | 400M+ | 195+ | Yes | No | Limited |
| Oxylabs | From $2.50/GB | 175M+ | 195+ | Yes | No | Limited |
| Decodo | From $2.20/GB | 55M+ | 195+ | Yes | No | 3-day money-back guarantee |
| IPRoyal | From $1.75/GB | 32M+ | 195+ | Yes | No | Pay-as-you-go, no minimum |
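To turn the headline rates into a rough budget, you can multiply them by your expected monthly traffic. The sketch below uses the "from" prices in the table as flat rates, which is a simplifying assumption: real invoices depend on plan tier, commitments, and volume discounts, so treat the output as a starting point for comparison rather than a quote.

```python
# Headline "from" rates in USD per GB, taken from the comparison table.
HEADLINE_RATES = {
    "Thordata": 0.65,
    "Bright Data": 4.20,
    "Oxylabs": 2.50,
    "Decodo": 2.20,
    "IPRoyal": 1.75,
}


def monthly_cost(gb_per_month, rates=HEADLINE_RATES):
    """Estimate monthly spend per provider, sorted from cheapest to most expensive."""
    costs = {name: round(rate * gb_per_month, 2) for name, rate in rates.items()}
    return dict(sorted(costs.items(), key=lambda item: item[1]))


for provider, cost in monthly_cost(500).items():
    print(f"{provider}: ${cost:,.2f}")
```

Running the same estimate at two or three realistic traffic levels makes it easier to see how much the per-GB rate actually matters for your workload.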

The best provider depends on your operational profile.

If your team prioritizes cost efficiency and flexible scaling, Thordata is a strong option to evaluate.

If your organization values enterprise processes, compliance workflows, and broad managed tooling, Bright Data may be the better fit.

If you want premium infrastructure with a developer-oriented product experience, Oxylabs is worth considering.

If you need a mid-market balance between usability and price, Decodo offers a practical middle path.

If your usage is occasional or low-volume, IPRoyal may be the simplest entry point.

When choosing a residential proxy provider, focus on IP pool quality, geographic coverage, targeting precision, session options, pricing transparency, and compatibility with your existing tools.

A cheaper provider is not necessarily a lower-quality one. Lower pricing can reflect a more self-serve model rather than weaker infrastructure. The important question is whether the provider fits your workflow and quality requirements.

If your work depends on localized content, regional pricing, or location-sensitive search visibility, city-level targeting can be valuable.

Unlimited plans are often worth considering when your daily bandwidth usage is high or unpredictable and you want more predictable cost control.

Smaller teams often prefer providers with simple onboarding, transparent pricing, and self-serve setup. The best fit depends on whether you prioritize low entry cost, flexible billing, or broader feature coverage.

Enterprise teams typically prioritize scale, process maturity, governance, and managed tooling. Providers with a more established enterprise product model may be a better fit in those cases.

The residential proxy market in 2026 offers strong options across multiple price points and operating models. The best choice depends less on brand recognition and more on how well a provider aligns with your workflow, data requirements, and budget.

For teams looking for cost-efficient residential proxy infrastructure with broad geographic coverage and flexible plan options, Thordata is a compelling option to consider. For teams with more formal enterprise requirements, providers such as Bright Data and Oxylabs may be a better fit. And for smaller or more occasional use cases, Decodo and IPRoyal remain relevant alternatives.

Choose based on use case, validate with a trial where possible, and compare the actual operating model, not just the headline price.

Looking for

Top-Tier Residential Proxies?

Looking for

Top-Tier Residential Proxies? 您在寻找顶级高质量的住宅代理吗?

您在寻找顶级高质量的住宅代理吗?

SERP Data Collection in 2026: When to Use a SERP API vs Managing Your Own Proxies

Compare SERP API vs residentia ...

Jenny Avery

2026-06-10

New Trends in Web Data Scraping in 2026: How Does Thordata’s Residential Proxy Solve the Anonymity and Stability Challenges?

When data becomes the oil of the new era, the scraping […]

Unknown

2026-06-10

Residential Proxies for AI Data Collection: A Practical Setup Guide

A practical guide to residenti ...

Jenny Avery

2026-06-10

Thordata Residential Proxy: The Ultimate Guide to AI-Powered Data Collection and Web Scraping Success

I’ll write a comprehensive, SE ...

Xyla Huxley

2026-06-10

How to Manage Multiple Social Media Accounts Without Getting Banned

Learn how to manage multiple F ...

Xyla Huxley

2026-06-10

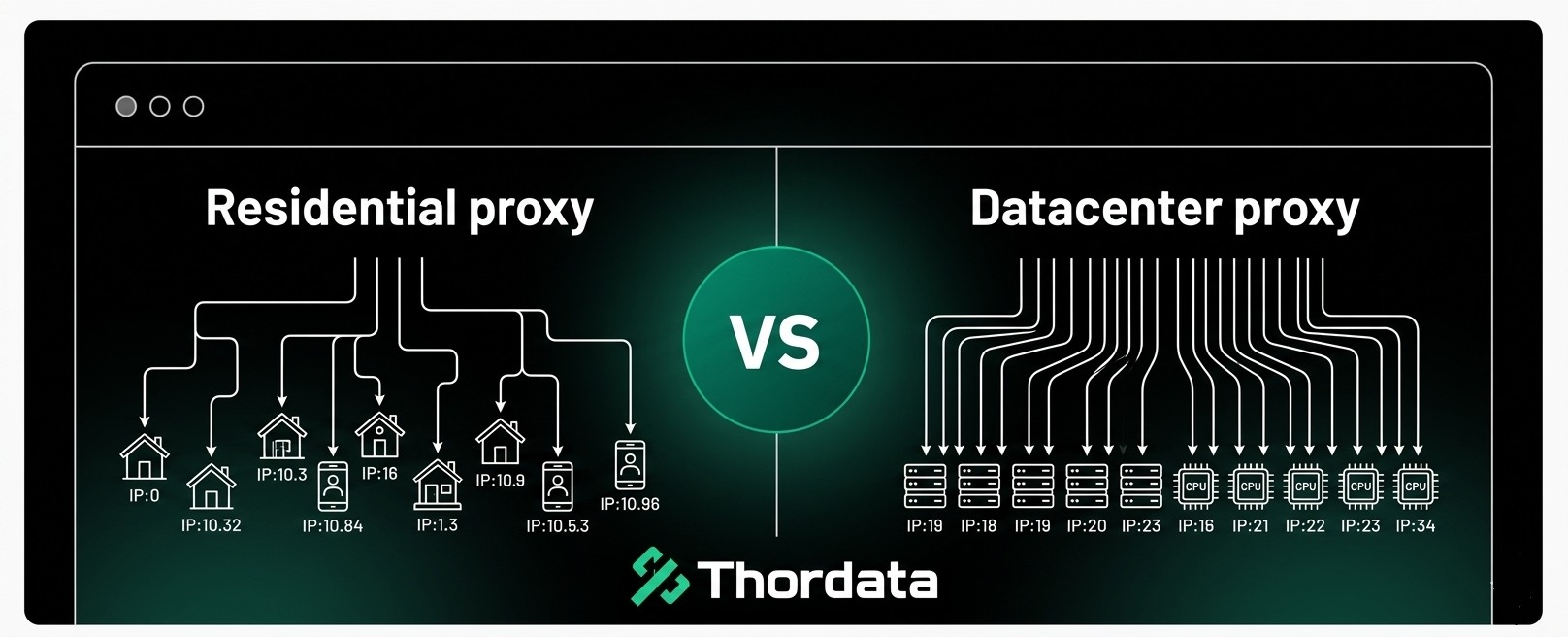

Residential Proxy vs Datacenter Proxy: Which Is the Best Proxy for Web Scraping?

Compare residential proxies an ...

Jenny Avery

2026-06-09

5 Best Fingerprint Browsers for 2026

Fingerprint browsers have beco ...

Xyla Huxley

2026-06-09

Unlimited Residential Proxies: When They Make Sense and How to Choose the Right Plan

Unlimited residential proxies ...

Xyla Huxley

2026-06-08

Residential Proxies for Web Scraping

In this article, we will look ...

Xyla Huxley

2026-06-08